{

"image_width": 690,

"image_height": 920,

"face_model_version": "3.0",

"face_shape_set": [

{

"face_profile": [

{

"x": 294,

"y": 207

},

{

"x": 289,

"y": 216

},

{

"x": 286,

"y": 226

},

{

"x": 284,

"y": 236

},

{

"x": 283,

"y": 246

},

{

"x": 283,

"y": 256

},

{

"x": 284,

"y": 266

},

{

"x": 286,

"y": 276

},

{

"x": 289,

"y": 285

},

{

"x": 294,

"y": 294

},

{

"x": 301,

"y": 301

},

{

"x": 314,

"y": 306

},

{

"x": 327,

"y": 307

},

{

"x": 340,

"y": 306

},

{

"x": 353,

"y": 302

},

{

"x": 365,

"y": 296

},

{

"x": 374,

"y": 287

},

{

"x": 382,

"y": 276

},

{

"x": 387,

"y": 264

},

{

"x": 392,

"y": 251

},

{

"x": 396,

"y": 238

}

],

"left_eye": [

{

"x": 298,

"y": 208

},

{

"x": 301,

"y": 212

},

{

"x": 305,

"y": 214

},

{

"x": 309,

"y": 215

},

{

"x": 314,

"y": 216

},

{

"x": 313,

"y": 210

},

{

"x": 309,

"y": 207

},

{

"x": 303,

"y": 206

}

],

"right_eye": [

{

"x": 363,

"y": 229

},

{

"x": 358,

"y": 230

},

{

"x": 353,

"y": 229

},

{

"x": 347,

"y": 227

},

{

"x": 342,

"y": 224

},

{

"x": 348,

"y": 221

},

{

"x": 354,

"y": 221

},

{

"x": 360,

"y": 223

}

],

"left_eye_brow": [

{

"x": 296,

"y": 196

},

{

"x": 302,

"y": 197

},

{

"x": 308,

"y": 198

},

{

"x": 313,

"y": 200

},

{

"x": 319,

"y": 202

},

{

"x": 315,

"y": 195

},

{

"x": 309,

"y": 192

},

{

"x": 302,

"y": 192

}

],

"right_eye_brow": [

{

"x": 377,

"y": 221

},

{

"x": 369,

"y": 217

},

{

"x": 360,

"y": 213

},

{

"x": 350,

"y": 211

},

{

"x": 341,

"y": 208

},

{

"x": 351,

"y": 204

},

{

"x": 362,

"y": 206

},

{

"x": 372,

"y": 211

}

],

"mouth": [

{

"x": 296,

"y": 262

},

{

"x": 297,

"y": 269

},

{

"x": 299,

"y": 276

},

{

"x": 305,

"y": 281

},

{

"x": 315,

"y": 283

},

{

"x": 326,

"y": 282

},

{

"x": 335,

"y": 277

},

{

"x": 325,

"y": 269

},

{

"x": 315,

"y": 262

},

{

"x": 309,

"y": 261

},

{

"x": 305,

"y": 258

},

{

"x": 300,

"y": 259

},

{

"x": 299,

"y": 265

},

{

"x": 303,

"y": 269

},

{

"x": 307,

"y": 272

},

{

"x": 316,

"y": 275

},

{

"x": 325,

"y": 276

},

{

"x": 326,

"y": 272

},

{

"x": 317,

"y": 269

},

{

"x": 308,

"y": 265

},

{

"x": 304,

"y": 263

},

{

"x": 300,

"y": 262

}

],

"nose": [

{

"x": 311,

"y": 242

},

{

"x": 325,

"y": 220

},

{

"x": 319,

"y": 226

},

{

"x": 313,

"y": 231

},

{

"x": 307,

"y": 236

},

{

"x": 302,

"y": 243

},

{

"x": 306,

"y": 249

},

{

"x": 311,

"y": 252

},

{

"x": 318,

"y": 254

},

{

"x": 329,

"y": 253

},

{

"x": 327,

"y": 243

},

{

"x": 326,

"y": 235

},

{

"x": 326,

"y": 228

}

],

"left_pupil": [

{

"x": 310,

"y": 211

}

],

"right_pupil": [

{

"x": 357,

"y": 225

}

]

}

]

}
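
The response above groups landmark points by facial feature under `face_shape_set`, one entry per detected face. As a minimal sketch of how a client might consume it, the snippet below parses a response and computes a bounding box for each feature. The helper name `feature_bounding_boxes` is hypothetical and not part of the API, and the sample is an abbreviated copy of the response shown above. It assumes every feature key maps to a list of `{"x", "y"}` points.

```python
import json


def feature_bounding_boxes(response_text):
    """Return one dict per face: {feature_name: (min_x, min_y, max_x, max_y)}.

    Hypothetical helper; assumes each feature is a list of {"x", "y"} points.
    """
    data = json.loads(response_text)
    boxes = []
    for face in data.get("face_shape_set", []):
        face_boxes = {}
        for name, points in face.items():
            if not points:
                continue  # skip features with no landmarks
            xs = [p["x"] for p in points]
            ys = [p["y"] for p in points]
            face_boxes[name] = (min(xs), min(ys), max(xs), max(ys))
        boxes.append(face_boxes)
    return boxes


# Abbreviated sample taken from the response above (most points omitted).
sample = """
{
  "image_width": 690,
  "image_height": 920,
  "face_model_version": "3.0",
  "face_shape_set": [
    {
      "left_eye": [
        {"x": 298, "y": 208},
        {"x": 314, "y": 216},
        {"x": 303, "y": 206}
      ],
      "left_pupil": [{"x": 310, "y": 211}],
      "right_pupil": [{"x": 357, "y": 225}]
    }
  ]
}
"""

boxes = feature_bounding_boxes(sample)
for feature, box in boxes[0].items():
    print(feature, box)
```

A single-point feature such as `left_pupil` yields a degenerate box whose minimum and maximum corners coincide.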

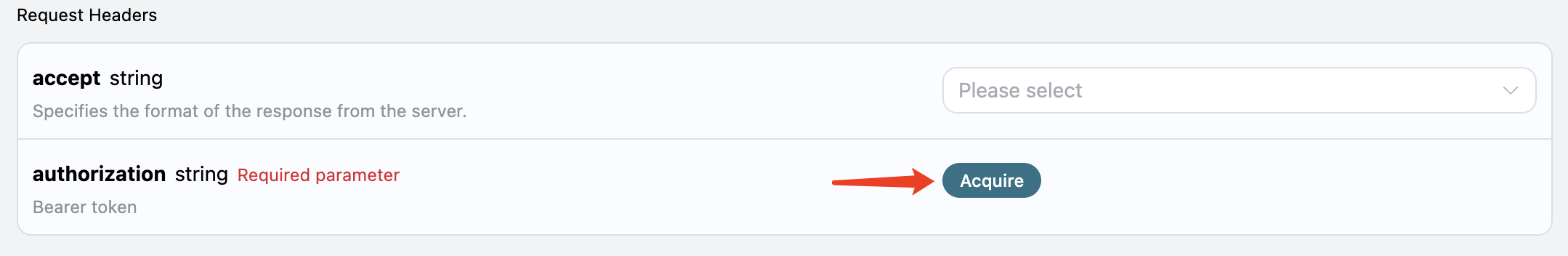

If you are not logged in, you will be redirected to the login page, where you can register or log in. Once you have done so, you will be returned to the current page automatically.

Your first application includes a free quota, so you can try the API at no cost.
