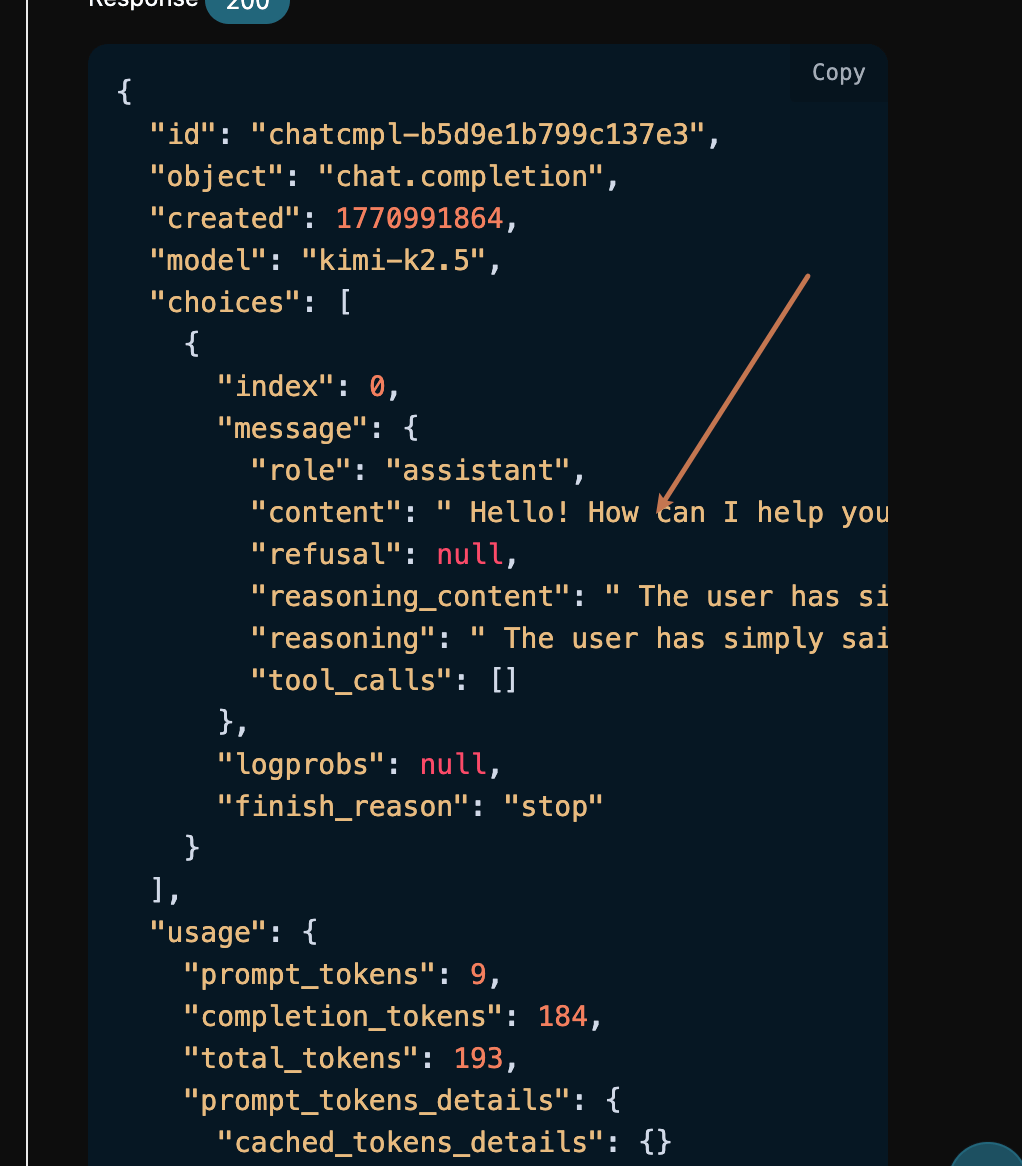

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"content": "", "role": "assistant"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " The", "reasoning": " The"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " user said \"Hello\". This is a simple greeting", "reasoning": " user said \"Hello\". This is a simple greeting"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": ". I should respond in a friendly, welcoming manner", "reasoning": ". I should respond in a friendly, welcoming manner"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": ". Since this is the start of a conversation,", "reasoning": ". Since this is the start of a conversation,"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " I should ask how I can help them today.\n\n", "reasoning": " I should ask how I can help them today.\n\n"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": "Let me craft a response:\n- Acknowledge the", "reasoning": "Let me craft a response:\n- Acknowledge the"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " greeting\n- Offer assistance\n- Keep it warm", "reasoning": " greeting\n- Offer assistance\n- Keep it warm"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " and professional\n\nSomething like: \"Hello! How", "reasoning": " and professional\n\nSomething like: \"Hello! How"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " can I help you today?\" or \"Hi there", "reasoning": " can I help you today?\" or \"Hi there"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": "! What can I do for you?\"\n\nActually", "reasoning": "! What can I do for you?\"\n\nActually"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": ", looking at the context, this seems to be", "reasoning": ", looking at the context, this seems to be"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " a general conversation starter. I'll keep it simple and", "reasoning": " a general conversation starter. I'll keep it simple and"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " open-ended to encourage them to share what they need", "reasoning": " open-ended to encourage them to share what they need"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"content": " Hello! How can I help", "reasoning_content": " help with. ", "reasoning": " help with. "}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"content": " you today?"}, "logprobs": null, "finish_reason": "stop"}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [], "usage": {"prompt_tokens": 9, "completion_tokens": 135, "total_tokens": 144, "prompt_tokens_details": {"cached_tokens_details": {}}, "completion_tokens_details": {}}}

data: [DONE]

If you are not logged in or registered, you will be automatically redirected to the login page inviting you to register and log in. After logging in or registering, you will automatically return to the current page.

During the first application, there will be a free quota provided, allowing you to use the API for free.

If you are not logged in or registered, you will be automatically redirected to the login page inviting you to register and log in. After logging in or registering, you will automatically return to the current page.

During the first application, there will be a free quota provided, allowing you to use the API for free.