Application Process

To use the Claude Chat Completion API, first visit the Claude Chat Completion API page and click the “Acquire” button to obtain the credentials needed for requests. If you are not logged in or registered, you will be redirected to the login page to register and log in. Afterward, you are automatically returned to the current page.

A free quota is provided with your first application, so you can try the API at no cost.

Basic Usage

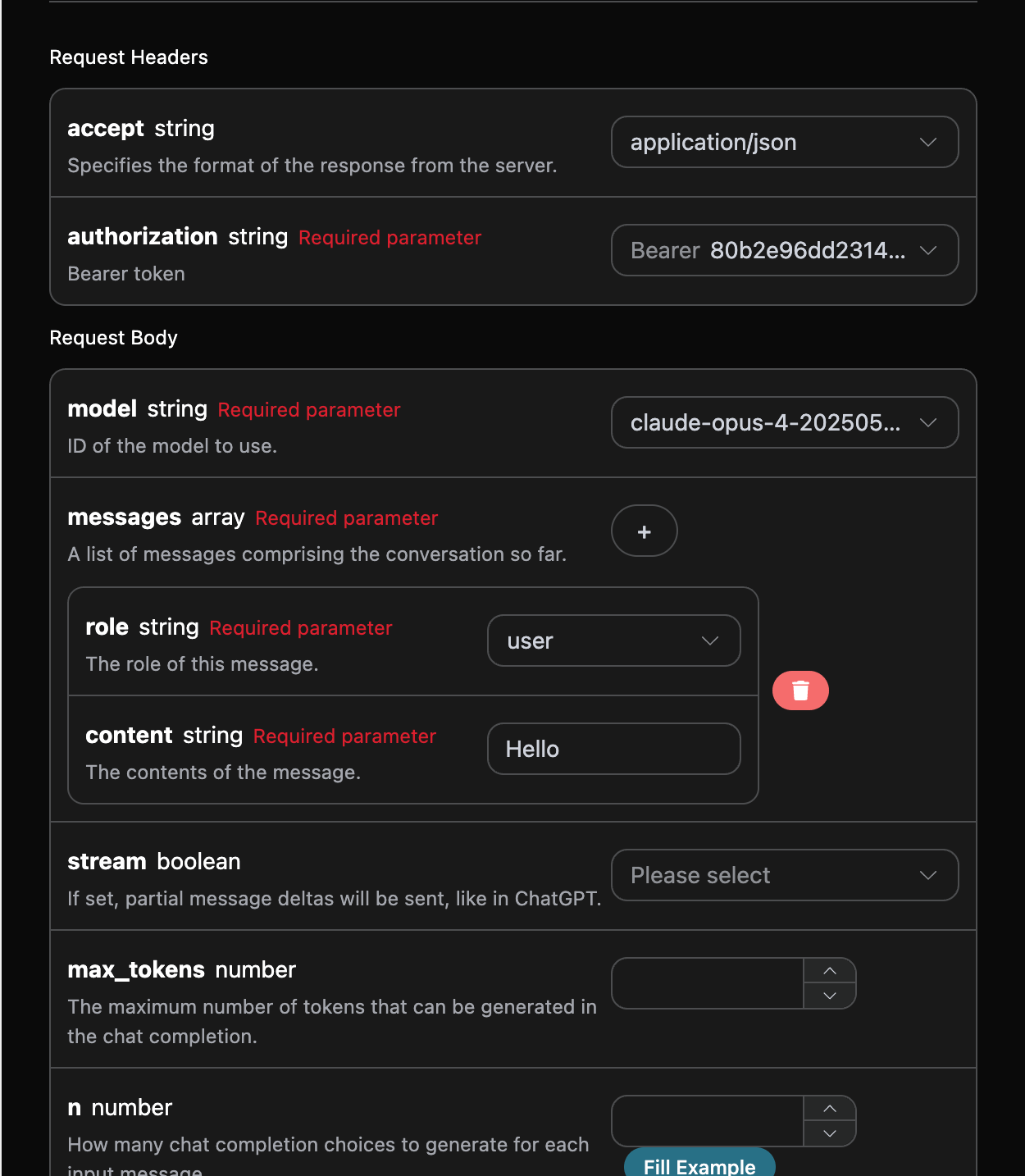

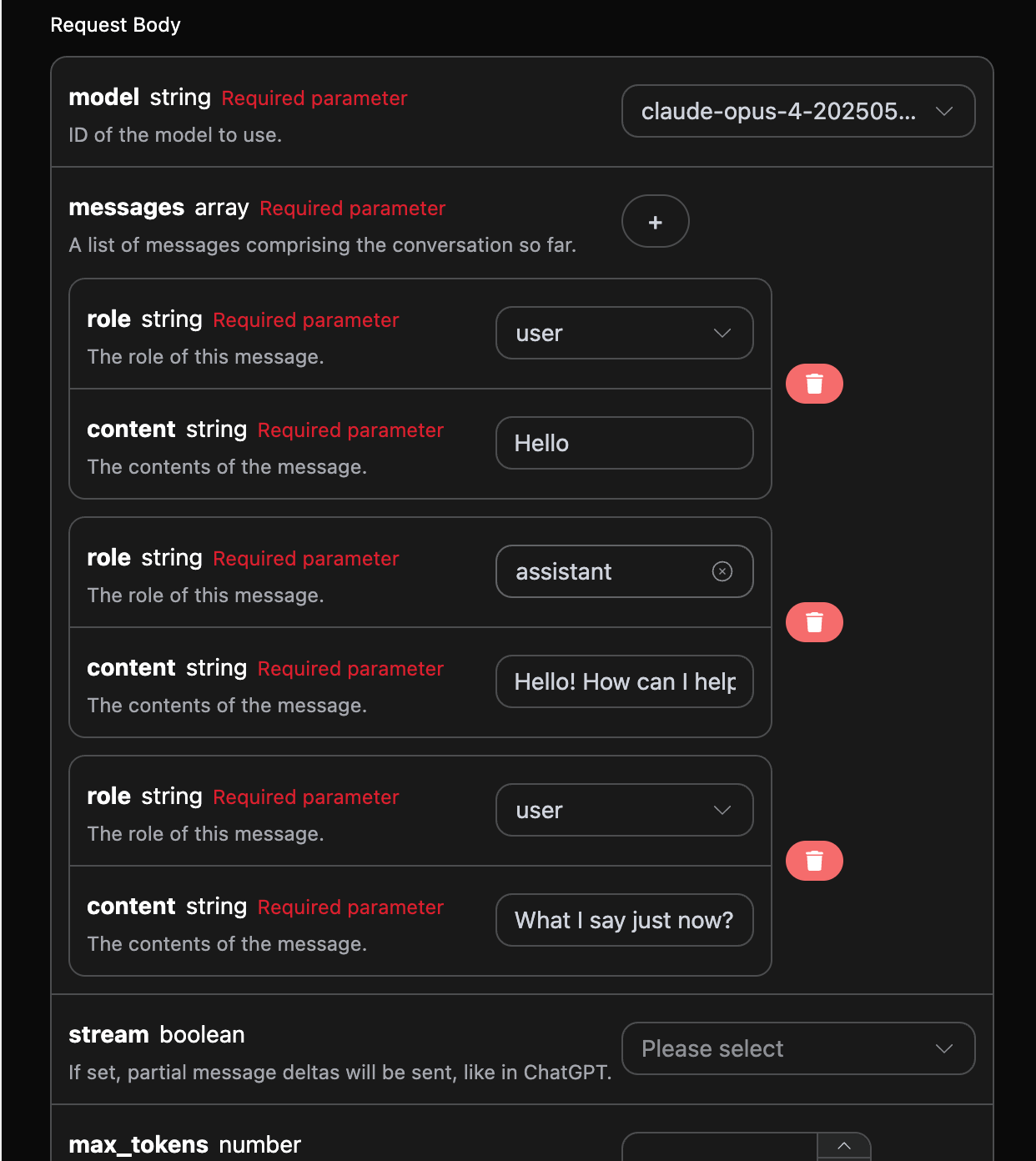

Next, you can fill in the corresponding content on the interface, as shown in the figure:

At least three fields need to be filled in. The first is authorization, which can be selected directly from the dropdown list. The second is model, the Claude model to use; about 20 models are currently available, and details can be found in the list of models we provide. The last is messages, an array of input messages, so several messages can be sent in a single request. Each message contains a role and a content: role identifies the speaker and can be user, assistant, or system, while content is the text of the message.
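The three fields described above can be assembled as in the following minimal sketch. The endpoint URL is a placeholder, not the real address, and sending the credential as a Bearer token is an assumption; use the values and the generated code shown on the API page.

```python
import json
import urllib.request

# Hypothetical endpoint: replace with the URL shown on the API page.
API_URL = "https://api.example.com/claude/chat/completions"

ALLOWED_ROLES = {"user", "assistant", "system"}


def build_request(token, model, messages):
    """Return (headers, body) for a chat completion request."""
    for message in messages:
        if message["role"] not in ALLOWED_ROLES:
            raise ValueError(f"unsupported role: {message['role']}")
    headers = {
        # Assumption: the credential is sent as a Bearer token.
        "authorization": f"Bearer {token}",
        "content-type": "application/json",
        "accept": "application/json",
    }
    body = {"model": model, "messages": messages}
    return headers, body


def send(headers, body, url=API_URL):
    """POST the request and return the decoded JSON response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


headers, body = build_request(
    "YOUR_TOKEN",
    "claude-sonnet-4-20250514",
    [{"role": "user", "content": "Hello"}],
)
# result = send(headers, body)  # uncomment once API_URL and the token are real
```

Keeping the request construction separate from the network call makes it easy to inspect the payload before anything is sent.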

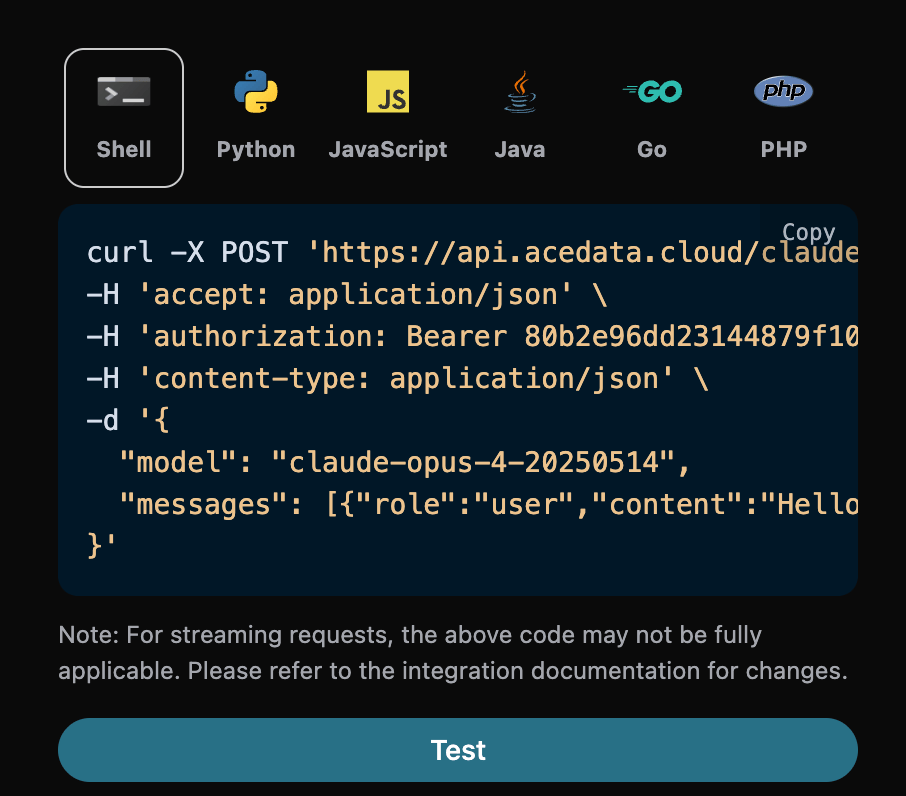

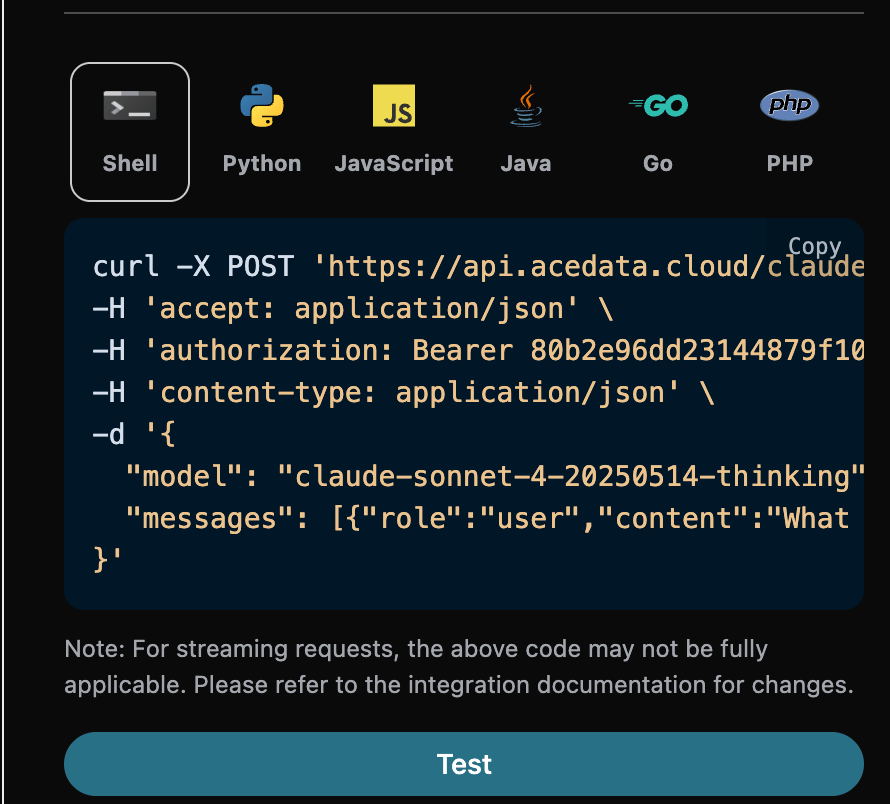

Corresponding code is also generated on the right side; you can copy it and run it directly, or click the “Try” button to test the request.

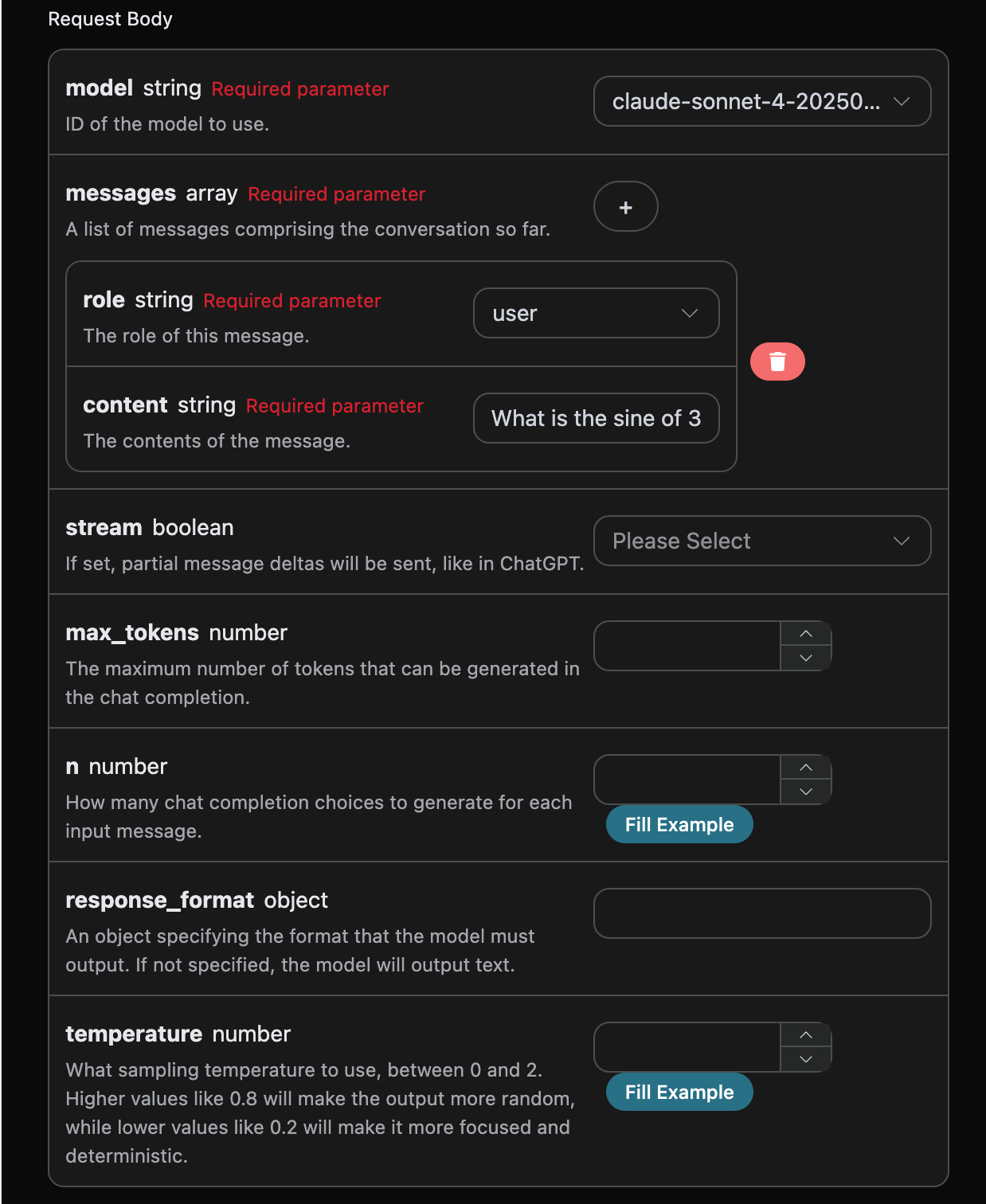

Common optional parameters:

- max_tokens: Limits the maximum number of tokens in a single response.
- temperature: Controls randomness, from 0 to 2; larger values produce more divergent output.
- n: The number of candidate responses to generate at once.
- response_format: Sets the format of the returned result.
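As a minimal sketch, the optional parameters can be merged into the request body only when they are set. The helper name with_options is hypothetical, and the range check simply mirrors the 0 to 2 range stated above.

```python
def with_options(body, max_tokens=None, temperature=None, n=None,
                 response_format=None):
    """Return a copy of body that includes only the options that were given."""
    if temperature is not None and not 0 <= temperature <= 2:
        raise ValueError("temperature must be between 0 and 2")
    options = {
        "max_tokens": max_tokens,
        "temperature": temperature,
        "n": n,
        "response_format": response_format,
    }
    result = dict(body)  # shallow copy so the caller's dict is not modified
    result.update({key: value for key, value in options.items()
                   if value is not None})
    return result


base = {
    "model": "claude-sonnet-4-20250514",
    "messages": [{"role": "user", "content": "Hello"}],
}
body = with_options(base, max_tokens=256, temperature=0.7)
```

Parameters left at None are omitted entirely, so the service's own defaults apply.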

The response contains several fields:

- id: The ID generated for this dialogue task, used to uniquely identify it.
- model: The selected Claude model.
- choices: The response information provided by Claude for the question.
- usage: Statistics on the tokens used for this Q&A.

choices contains Claude’s response information, as shown in the figure. The content field inside choices holds the specific text of Claude’s reply.

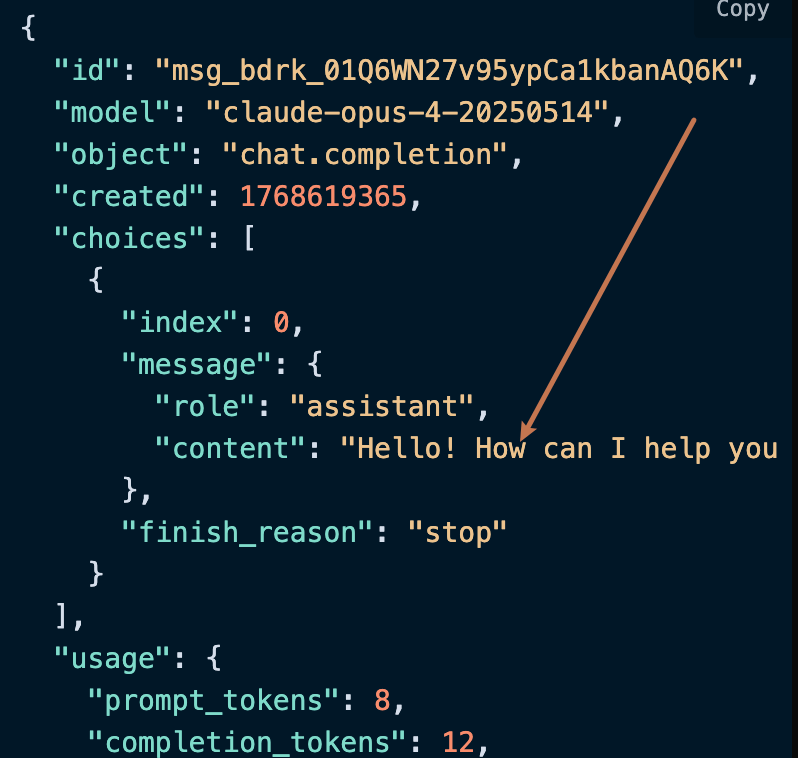
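A minimal sketch of reading the reply from a response follows. It assumes the common chat completion layout in which each entry of choices has a message object with a content field; the sample response is illustrative, not real output, so check the layout against an actual response.

```python
def first_reply(response):
    """Return the text of the first choice, or None if there is none."""
    choices = response.get("choices") or []
    if not choices:
        return None
    # Assumption: the reply text is at choices[0]["message"]["content"].
    message = choices[0].get("message") or {}
    return message.get("content")


# Illustrative response with the four documented top-level fields.
sample = {
    "id": "example-id",
    "model": "claude-sonnet-4-20250514",
    "choices": [
        {"index": 0, "message": {"role": "assistant", "content": "Hi there"}}
    ],
    "usage": {},  # token statistics omitted in this sample
}

reply = first_reply(sample)
```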

Streaming Response

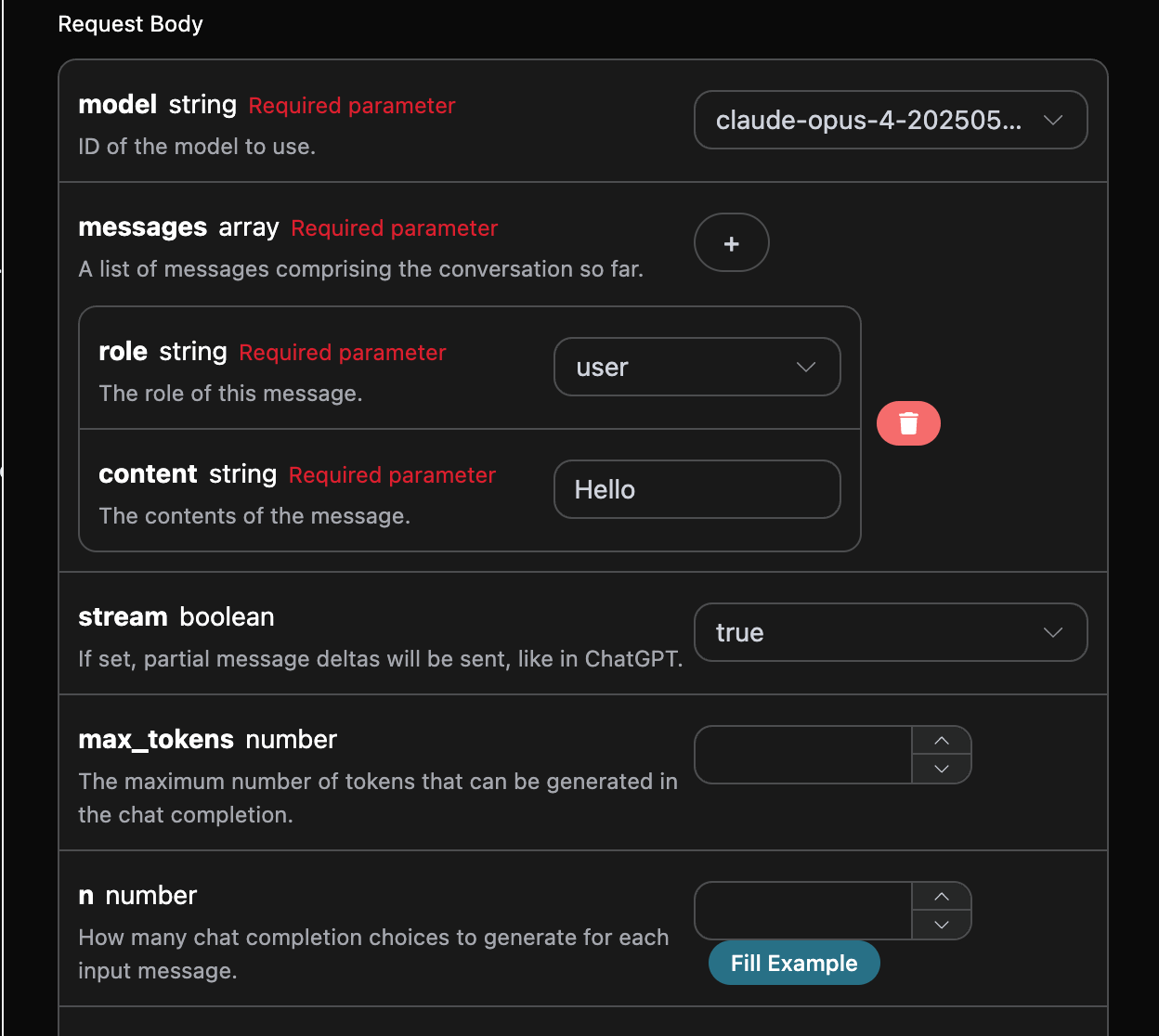

This interface also supports streaming responses, which is very useful for web integration because it lets a webpage display the reply character by character. To receive the response as a stream, set the stream parameter in the request to true.

Modify the request as shown in the figure. The calling code also needs corresponding changes to support streaming responses.

After setting stream to true, the API returns the corresponding JSON data line by line, so the code must be adapted to read the results one line at a time.

Python sample calling code:
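A minimal sketch of the line-by-line handling follows. It assumes each line has the form `data: {...}`, that new text arrives under `choices[].delta.content` (an OpenAI-style layout, not confirmed here), and that a `data: [DONE]` line ends the stream. The function takes any iterable of lines, such as an HTTP response object read line by line.

```python
import json


def iter_stream_text(lines):
    """Yield the newly added text from each streamed line until [DONE]."""
    for raw in lines:
        line = raw.decode("utf-8") if isinstance(raw, bytes) else raw
        line = line.strip()
        if not line.startswith("data:"):
            continue  # skip blank keep-alive lines and anything unexpected
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # the stream has completely ended
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            # Assumption: incremental text is in choice["delta"]["content"].
            text = (choice.get("delta") or {}).get("content")
            if text:
                yield text


# Illustrative lines, not real API output.
sample_lines = [
    b'data: {"id": "x", "choices": [{"delta": {"content": "Hel"}}]}',
    b"",
    b'data: {"id": "x", "choices": [{"delta": {"content": "lo"}}]}',
    b"data: [DONE]",
    b'data: {"id": "x", "choices": [{"delta": {"content": "ignored"}}]}',
]

full_text = "".join(iter_stream_text(sample_lines))
```

Because the function is a generator, each fragment can be shown to the user as soon as it arrives instead of waiting for the full reply.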

Each line of the output carries a data field, and the choices in data hold the latest response content, in the same structure as described above. Each choices entry contains only the newly added content, which you can forward to your own system. The end of the stream is signaled by the content of data: when it is [DONE], the streaming response has completely ended. The returned data has several fields:

- id: The ID generated for this dialogue task, used to uniquely identify it.
- model: The Claude model selected for the request.
- choices: The response information provided by Claude for the question.

Multi-turn Dialogue

To integrate multi-turn dialogue, pass multiple messages in the messages field, including the earlier turns of the conversation. A specific example with multiple messages is shown in the image below:

The choices field in the response has the same structure as in basic usage. It contains Claude’s reply, which takes the whole conversation into account and answers the latest question in that context.
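A minimal sketch of keeping a conversation history for multi-turn dialogue follows. The helper add_turn is hypothetical and the assistant text is illustrative; in practice it would be the content returned by the previous call.

```python
def add_turn(history, role, content):
    """Return a new history list with one more message appended."""
    return history + [{"role": role, "content": content}]


history = []
history = add_turn(history, "user", "What is the capital of France?")
# After each call, append Claude's reply so the next request has the context.
history = add_turn(history, "assistant", "The capital of France is Paris.")
history = add_turn(history, "user", "What is its population?")

body = {"model": "claude-sonnet-4-20250514", "messages": history}
```

The API call itself carries no memory in this sketch, so every request sends the full history in messages.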

Deep Thinking Model

The claude-opus-4-20250514-thinking and claude-sonnet-4-20250514-thinking models differ from the other models in that they think deeply about the prompt before answering and return the thinking process along with the result. This article demonstrates the deep thinking functionality through a specific example. Fill in the corresponding content on the Claude Chat Completion API interface, as shown in the figure:

The answer in choices is produced after deep thinking, and the response also includes the reasoning process: the reasoning_content field holds the model’s thinking process. The response text in choices should be rendered as Markdown for the best reading experience.
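A minimal sketch of separating the thinking process from the final answer follows. It assumes reasoning_content sits next to content inside the message object of each choice; the exact location is not confirmed here, and the sample response is illustrative only.

```python
def split_reasoning(response):
    """Return (reasoning, answer) from the first choice of a response."""
    choices = response.get("choices") or []
    if not choices:
        return None, None
    message = choices[0].get("message") or {}
    # Assumption: reasoning_content is a sibling of content in the message.
    return message.get("reasoning_content"), message.get("content")


sample = {
    "model": "claude-sonnet-4-20250514-thinking",
    "choices": [
        {
            "message": {
                "role": "assistant",
                "reasoning_content": "Compare the two numbers digit by digit.",
                "content": "9.9 is larger than 9.11.",
            }
        }
    ],
}

reasoning, answer = split_reasoning(sample)
```

Returning the two parts separately lets an interface show the reasoning in a collapsed panel and render only the answer as Markdown.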

Visual Model

claude-sonnet-4-20250514 is a multimodal large language model developed by Anthropic, which adds visual understanding capabilities based on claude-4. It can process text and image inputs together, achieving cross-modal understanding and generation. Text processing with this model works as described in the basic usage above.

Image processing is enabled by adding a type field to each item of the original content, which indicates whether that item is text or an image. The following shows how to call this functionality using Curl and Python.

- Curl Script Method

- Python Script Method
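For the Python method, a minimal sketch of a message that combines text and an image follows. It assumes OpenAI-style content parts, where type is "text" or "image_url"; the image_url key and the example URL are assumptions, so check the generated code on the API page for the exact shape.

```python
def image_message(text, image_url):
    """Build a user message whose content mixes a text part and an image part."""
    return {
        "role": "user",
        "content": [
            # The type field tells the API how to interpret each part.
            {"type": "text", "text": text},
            # Assumption: images are passed by URL under the image_url key.
            {"type": "image_url", "image_url": {"url": image_url}},
        ],
    }


body = {
    "model": "claude-sonnet-4-20250514",
    "messages": [
        image_message(
            "What is shown in this picture?",
            "https://example.com/picture.png",  # placeholder image URL
        )
    ],
}
```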

Error Handling

When calling the API, if an error occurs, the API will return the corresponding error code and message. For example:

- 400 token_mismatched: Bad request, possibly due to missing or invalid parameters.
- 400 api_not_implemented: Bad request, possibly due to missing or invalid parameters.
- 401 invalid_token: Unauthorized, invalid or missing authorization token.
- 429 too_many_requests: Too many requests, you have exceeded the rate limit.
- 500 api_error: Internal server error, something went wrong on the server.
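One way to act on these codes is to retry only the errors that may be temporary. The sketch below is an illustration, not part of the API: ApiError and call_with_retry are hypothetical names, and treating 429 and 500 as retryable is a design assumption.

```python
import time


class ApiError(Exception):
    """Carries the HTTP status and error code returned by the API."""

    def __init__(self, status, code, message=""):
        super().__init__(f"{status} {code}: {message}")
        self.status = status
        self.code = code


# Assumption: rate limits and server errors may succeed on a later attempt.
RETRYABLE_STATUSES = {429, 500}


def call_with_retry(call, attempts=3, delay=1.0, sleep=time.sleep):
    """Run call(), retrying retryable errors with exponential backoff."""
    for attempt in range(attempts):
        try:
            return call()
        except ApiError as error:
            is_last = attempt == attempts - 1
            if error.status not in RETRYABLE_STATUSES or is_last:
                raise  # 400 and 401 need a fixed request, not a retry
            sleep(delay * 2 ** attempt)
```

Errors such as 400 and 401 are raised immediately, since repeating the same request cannot fix a bad parameter or an invalid token.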