Application Process

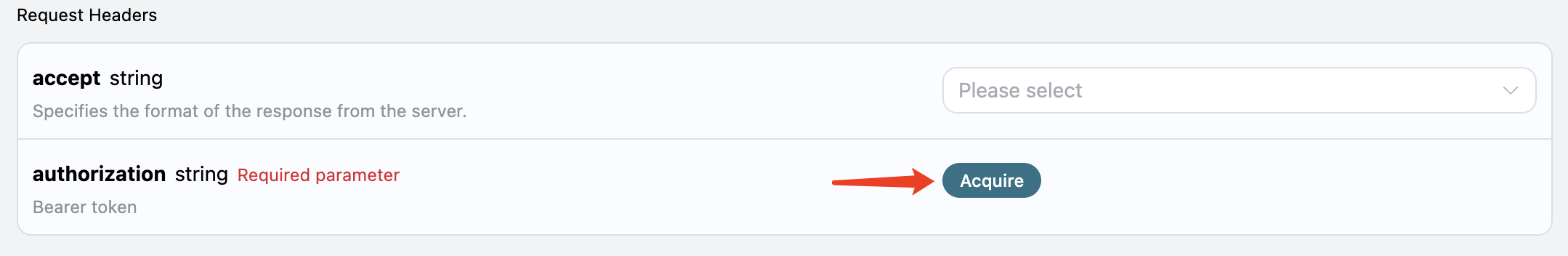

To use the API, first apply for the corresponding service on the Wan Videos Generation API page. On that page, click the “Acquire” button, as shown in the image. If you are not logged in or registered, you will be redirected to the login page to register and log in, and then returned to the current page automatically.

A free quota is granted with your first application, so you can try the API for free.

Basic Usage

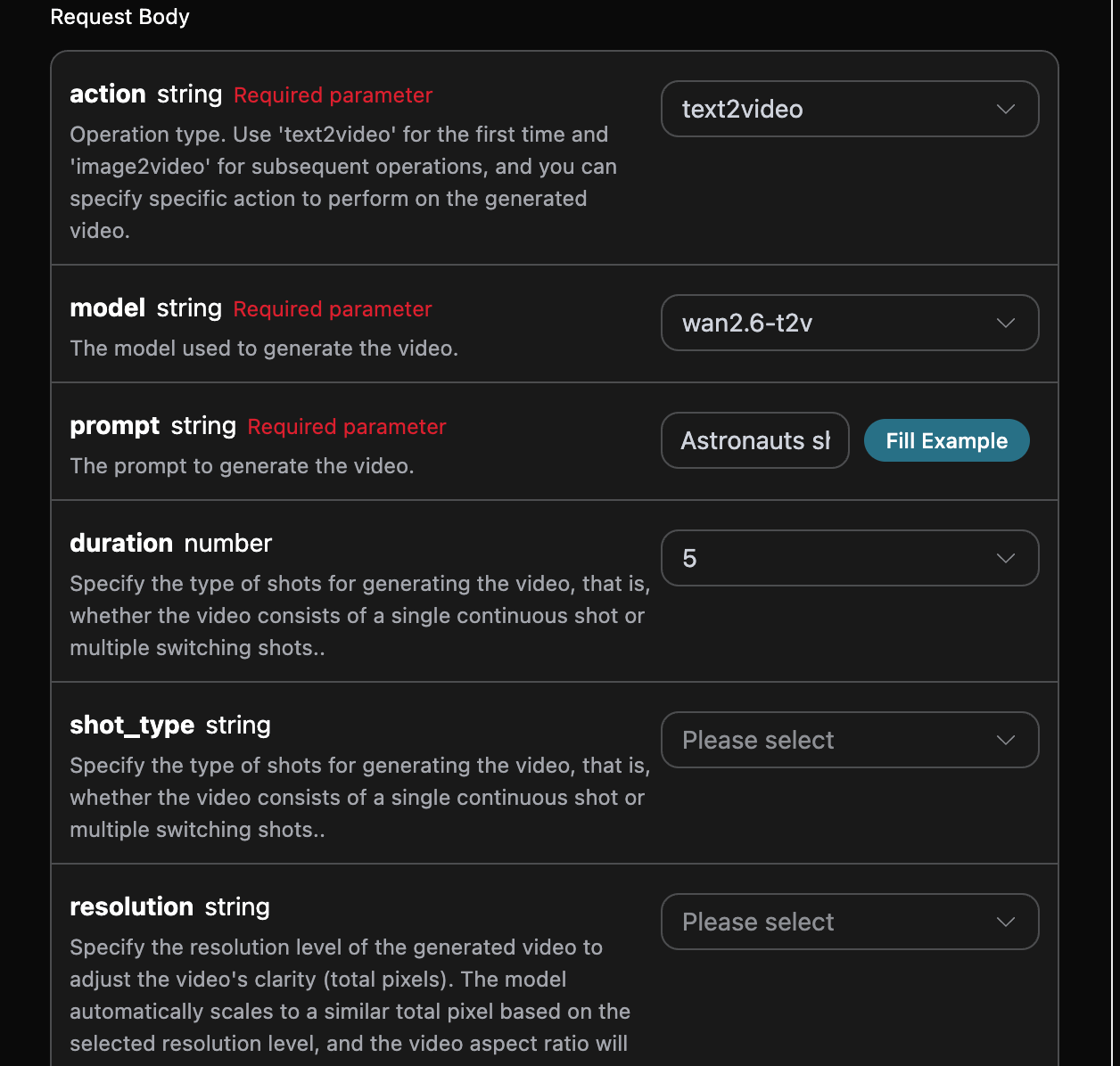

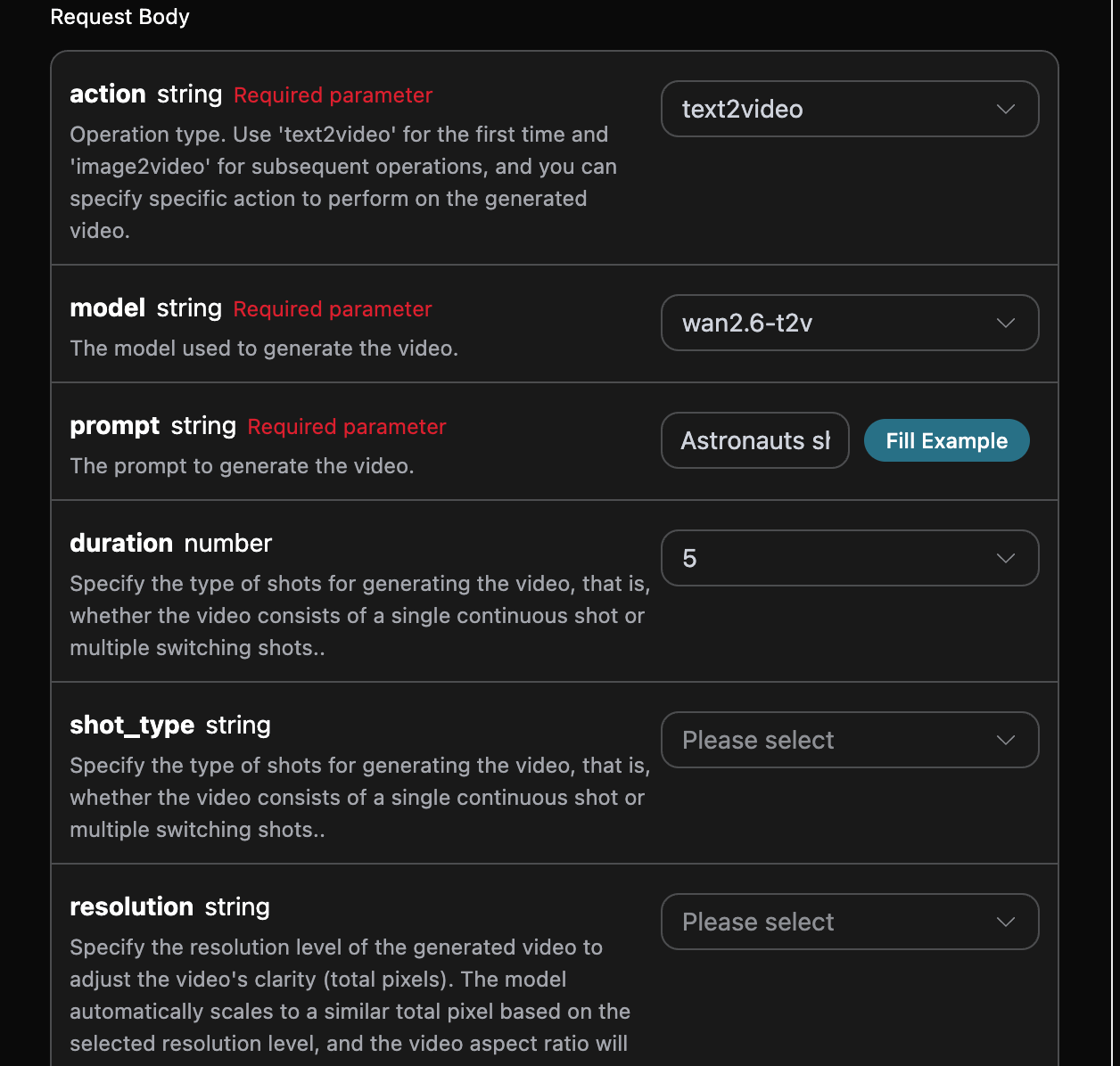

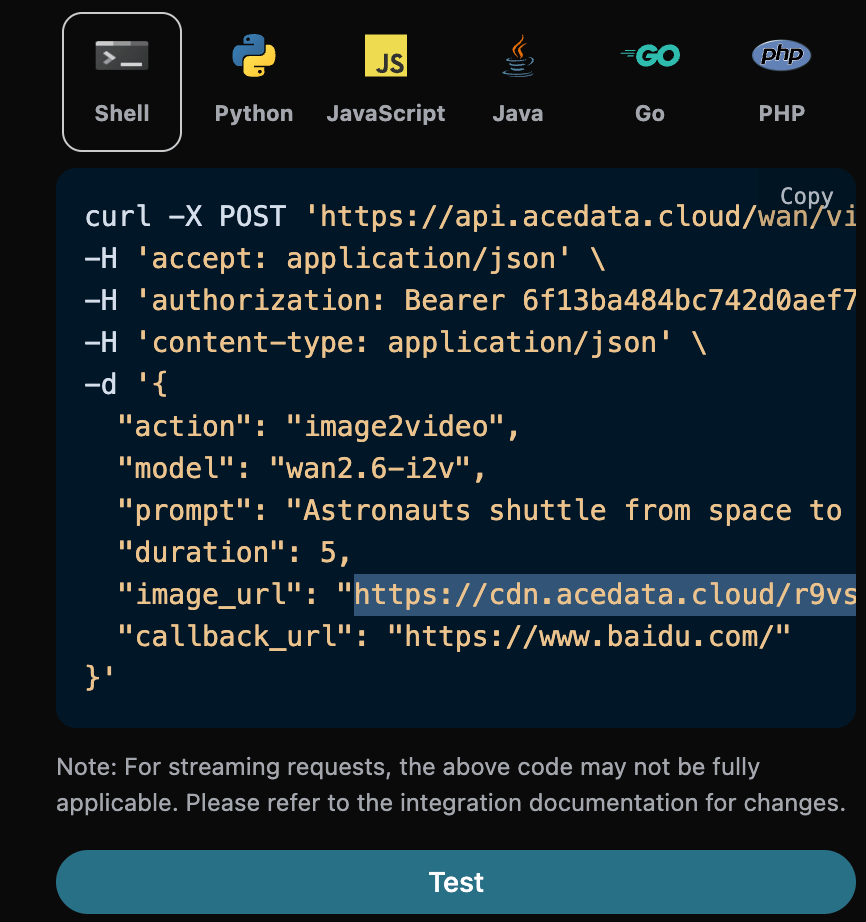

First, understand the basic usage: you pass the prompt `prompt`, the action `action`, the first-frame reference image `image_url`, and the model `model` to obtain the generated result. The `action` field accepts two values: text-to-video (`text2video`) and image-to-video (`image2video`); for a first request, simply set it to `text2video`. You also need to specify the model `model`, which currently mainly includes `wan2.6-i2v`, `wan2.6-r2v`, `wan2.6-i2v-flash`, and `wan2.6-t2v`. The specific content is as follows:

Request headers:

- `accept`: the format in which you want to receive the response; set it to `application/json`, which means JSON.
- `authorization`: the key used to call the API, which can be selected directly after applying.

Request body:

- `model`: the model used to generate the video, mainly `wan2.6-i2v`, `wan2.6-r2v`, `wan2.6-i2v-flash`, and `wan2.6-t2v`.
- `action`: the action for this video generation task, one of two: text-to-video (`text2video`) or image-to-video (`image2video`). Text-to-video currently supports only the model `wan2.6-t2v`. Image-to-video currently supports only the models `wan2.6-i2v`, `wan2.6-r2v`, and `wan2.6-i2v-flash`.
- `image_url`: required when the image-to-video action `image2video` is selected; the link to the first-frame reference image. Currently supported only by the models `wan2.6-i2v` and `wan2.6-i2v-flash`.
- `reference_video_urls`: optional for image-to-video; the reference video links for generation. Currently supported only by the model `wan2.6-r2v`.
- `size`: the resolution of the generated video, in the format `width*height`. The default value and the allowed values depend on the `model` parameter. For the specific rules, refer to the official documentation.
- `duration`: the duration of the generated video; the main supported values are 5, 10, and 15.
- `shot_type`: optional; the shot type of the generated video, i.e., whether the video is one continuous shot or several switching shots. Only effective when `"prompt_extend": true`. Parameter priority: `shot_type` > `prompt`. For example, if `shot_type` is set to `"single"`, the model still outputs a single-shot video even if the prompt contains “generate multi-shot video”. For the specific rules, refer to the official documentation.
- `negative_prompt`: optional; a negative prompt describing content you do not want to appear in the video, which constrains the output. Supports both Chinese and English, up to 500 characters; anything beyond that is truncated automatically. Example values: low resolution, errors, worst quality, low quality, incomplete, extra fingers, poor proportions.
- `resolution`: the resolution level of the generated video, used to adjust its clarity (total pixels). The model scales the output to a similar total pixel count based on the selected level, and the aspect ratio of the video stays as close as possible to that of the input image `image_url`. For more details, refer to the official documentation.
- `audio_url`: the URL of an audio file that the model uses when generating the video. For usage, refer to the official documentation.
- `audio`: whether to generate a video with sound. Parameter priority: `audio` > `audio_url`. When `audio=false`, the output is a silent video even if `audio_url` is passed, and it is billed as a silent video. The default value is `true`.
- `prompt_extend`: whether to enable intelligent prompt rewriting. When enabled, a large model rewrites the input prompt. This noticeably improves results for shorter prompts but increases processing time. The default value is `true`.
- `prompt`: the prompt text.
- `callback_url`: the URL to which the result is sent back.
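The precedence rules in the parameter list (`audio` over `audio_url`, `shot_type` only taking effect with `prompt_extend`, and the 500-character limit on `negative_prompt`) may be easier to follow as code. The following is a minimal Python sketch that mirrors those documented rules on the client side. The helper name `effective_options` is hypothetical, and the real resolution happens on the server, so treat this as an illustration rather than the API's implementation.

```python
from typing import Any, Dict

NEGATIVE_PROMPT_MAX_CHARS = 500  # limit stated in the parameter list


def effective_options(options: Dict[str, Any]) -> Dict[str, Any]:
    """Return the options that would take effect under the documented rules.

    Hypothetical helper: it only mirrors the rules described in this document.
    """
    result = dict(options)

    # Documented defaults: audio and prompt_extend are true when omitted.
    result.setdefault("audio", True)
    result.setdefault("prompt_extend", True)

    # audio > audio_url: with audio=false the output is silent even if
    # audio_url is passed.
    if not result["audio"]:
        result.pop("audio_url", None)

    # shot_type is only effective when prompt_extend is true.
    if not result["prompt_extend"]:
        result.pop("shot_type", None)

    # negative_prompt beyond 500 characters is truncated.
    if "negative_prompt" in result:
        result["negative_prompt"] = result["negative_prompt"][:NEGATIVE_PROMPT_MAX_CHARS]

    return result
```

For example, calling `effective_options({"audio": False, "audio_url": "https://example.com/a.mp3"})` returns a dictionary without `audio_url`, matching the rule that a silent video is produced when `audio` is false.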

The response contains the following fields:

- `success`: the current status of the video generation task.
- `task_id`: the ID of the video generation task.
- `video_url`: the link to the video produced by the task.
- `state`: the current state of the video generation task.

Additionally, if you want the corresponding integration code, you can copy the generated code directly. For example, the cURL code is as follows:

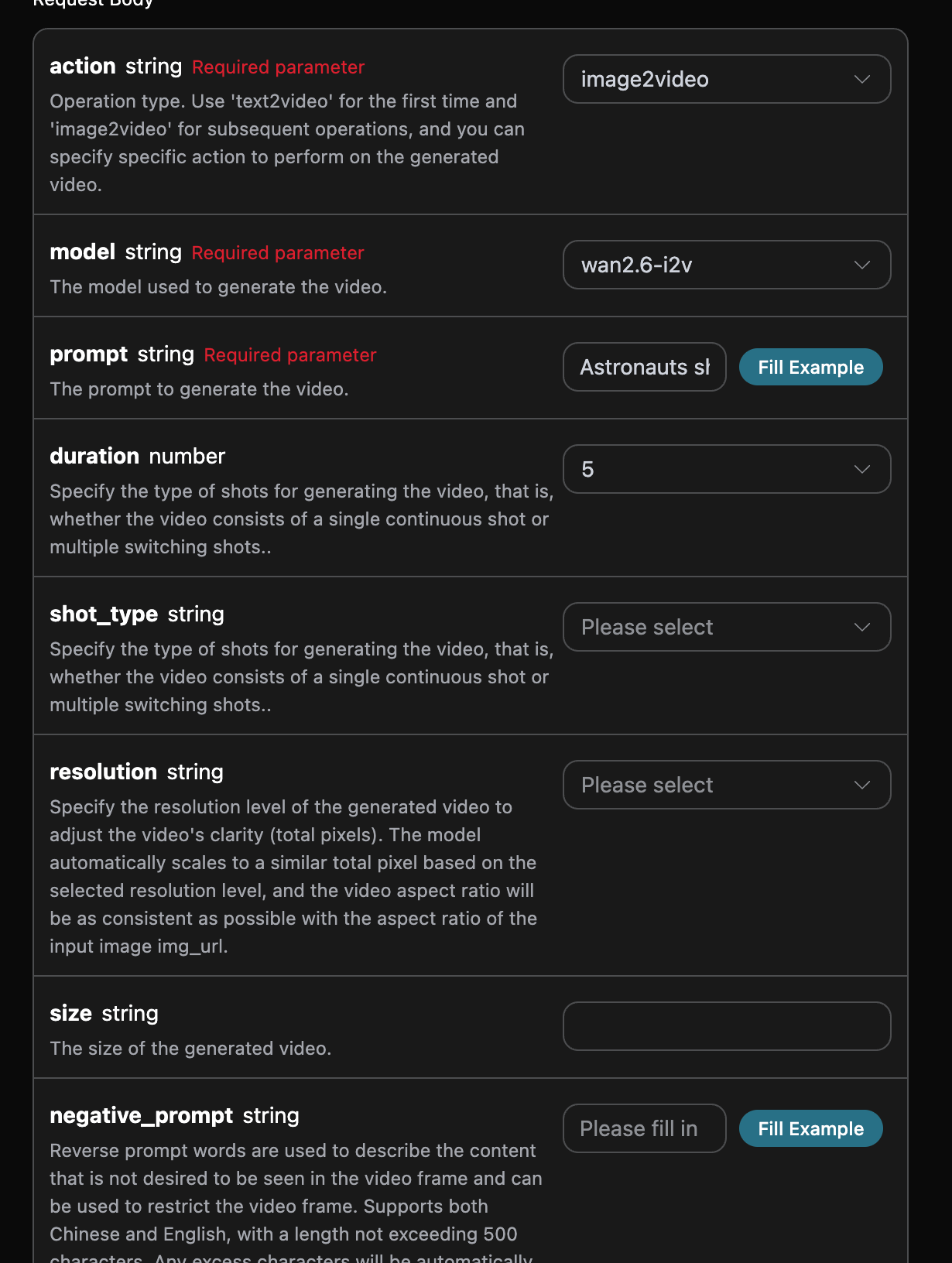
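For readers who prefer Python to cURL, here is a rough equivalent that uses only the standard library. The endpoint `API_URL`, the `Bearer` authorization scheme, the `content-type` header, and the token value are placeholders and assumptions, not values confirmed by this document; copy the real ones from the code generated on the API page.

```python
import json
import urllib.request

# Assumptions: placeholder endpoint and token. Replace both with the values
# shown in the generated code on the API page.
API_URL = "https://api.example.com/wan/videos"
API_TOKEN = "YOUR_API_TOKEN"


def build_request(payload: dict) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying the JSON payload."""
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "accept": "application/json",
            "authorization": f"Bearer {API_TOKEN}",  # assumed scheme
            "content-type": "application/json",
        },
        method="POST",
    )


def generate_video(payload: dict, timeout: float = 300.0) -> dict:
    """Send the request and decode the JSON response."""
    with urllib.request.urlopen(build_request(payload), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

A text-to-video call would then look like `generate_video({"action": "text2video", "model": "wan2.6-t2v", "prompt": "..."})`, after which the `task_id` and `video_url` fields can be read from the returned dictionary.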

Image-to-Video Functionality

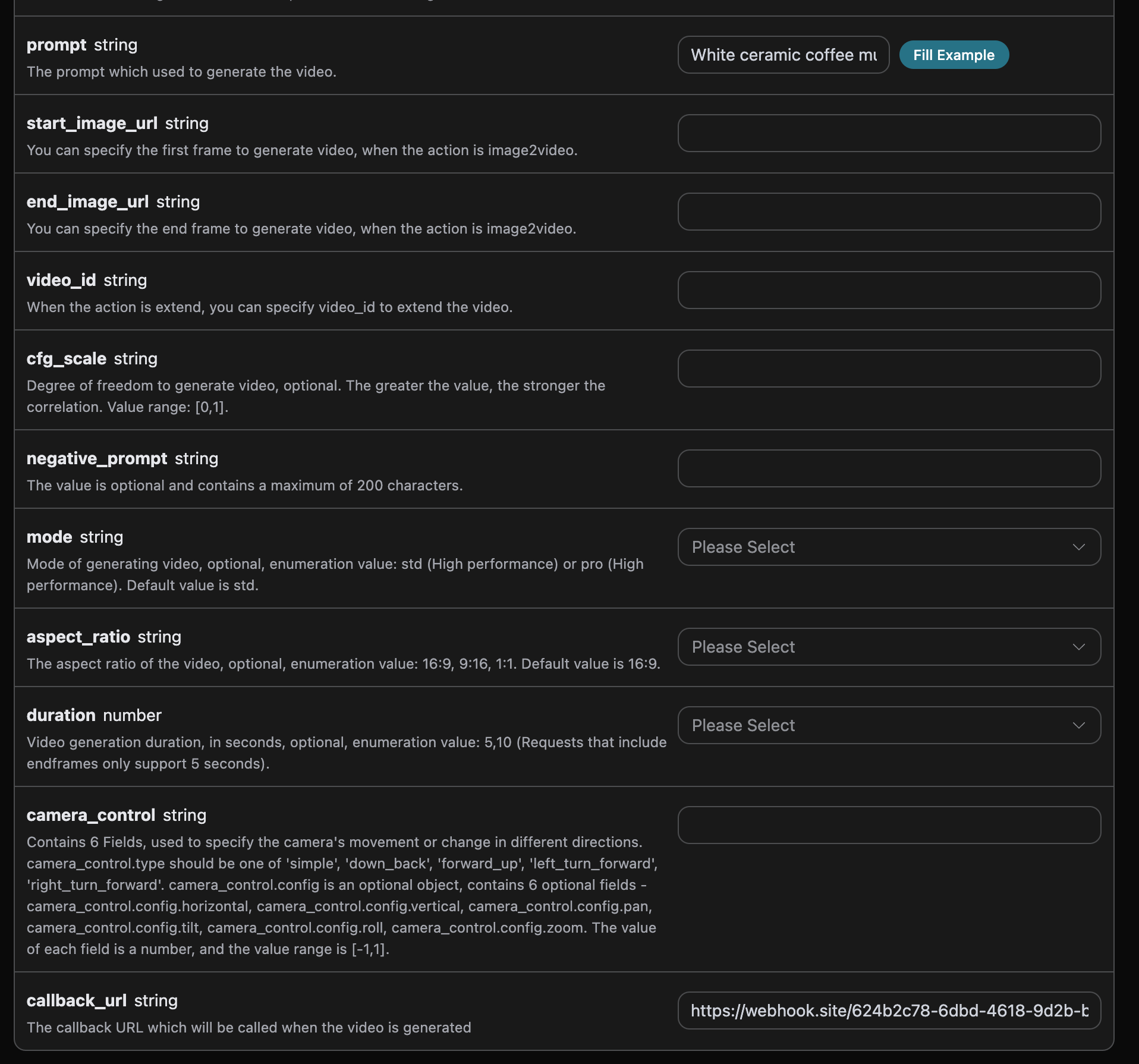

If you want to generate a video from a reference image or a reference video, set the parameter `action` to `image2video` and provide the required reference image link or reference video link. You must also supply the prompt that customizes the generated video. Specify the following:

- `model`: the model used to generate the video, mainly `wan2.6-i2v`, `wan2.6-r2v`, `wan2.6-i2v-flash`, and `wan2.6-t2v`.
- `image_url`: required when the image-to-video action `image2video` is selected; the link to the first-frame reference image. Currently supported only by the models `wan2.6-i2v` and `wan2.6-i2v-flash`.
- `reference_video_urls`: optional for image-to-video; the reference video links for generation. Currently supported only by the model `wan2.6-r2v`.
- `prompt`: the prompt text.
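The model and reference-input constraints described in this document can be checked before a request is sent. Below is a small Python sketch of such a check. The helper name `build_payload` is hypothetical, and it encodes only the rules stated here; treating `image_url` as mandatory for the two first-frame models is an interpretation of the wording above, not a confirmed server rule.

```python
from typing import List, Optional

# Model support per action, as stated in this document.
MODELS_BY_ACTION = {
    "text2video": {"wan2.6-t2v"},
    "image2video": {"wan2.6-i2v", "wan2.6-r2v", "wan2.6-i2v-flash"},
}
IMAGE_URL_MODELS = {"wan2.6-i2v", "wan2.6-i2v-flash"}
REFERENCE_VIDEO_MODELS = {"wan2.6-r2v"}


def build_payload(
    action: str,
    model: str,
    prompt: str,
    image_url: Optional[str] = None,
    reference_video_urls: Optional[List[str]] = None,
) -> dict:
    """Validate the documented constraints and return a request body."""
    if action not in MODELS_BY_ACTION:
        raise ValueError(f"unknown action: {action}")
    if model not in MODELS_BY_ACTION[action]:
        raise ValueError(f"model {model} is not supported for {action}")
    if image_url is not None and model not in IMAGE_URL_MODELS:
        raise ValueError(f"image_url is not supported by {model}")
    if reference_video_urls and model not in REFERENCE_VIDEO_MODELS:
        raise ValueError(f"reference_video_urls is not supported by {model}")
    # Interpretation: first-frame models need a first-frame image.
    if model in IMAGE_URL_MODELS and image_url is None:
        raise ValueError(f"image_url is required for {model}")

    payload = {"action": action, "model": model, "prompt": prompt}
    if image_url is not None:
        payload["image_url"] = image_url
    if reference_video_urls:
        payload["reference_video_urls"] = list(reference_video_urls)
    return payload
```

Failing early on the client avoids spending a request on a combination the API is documented to reject, such as `text2video` with `wan2.6-i2v`.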

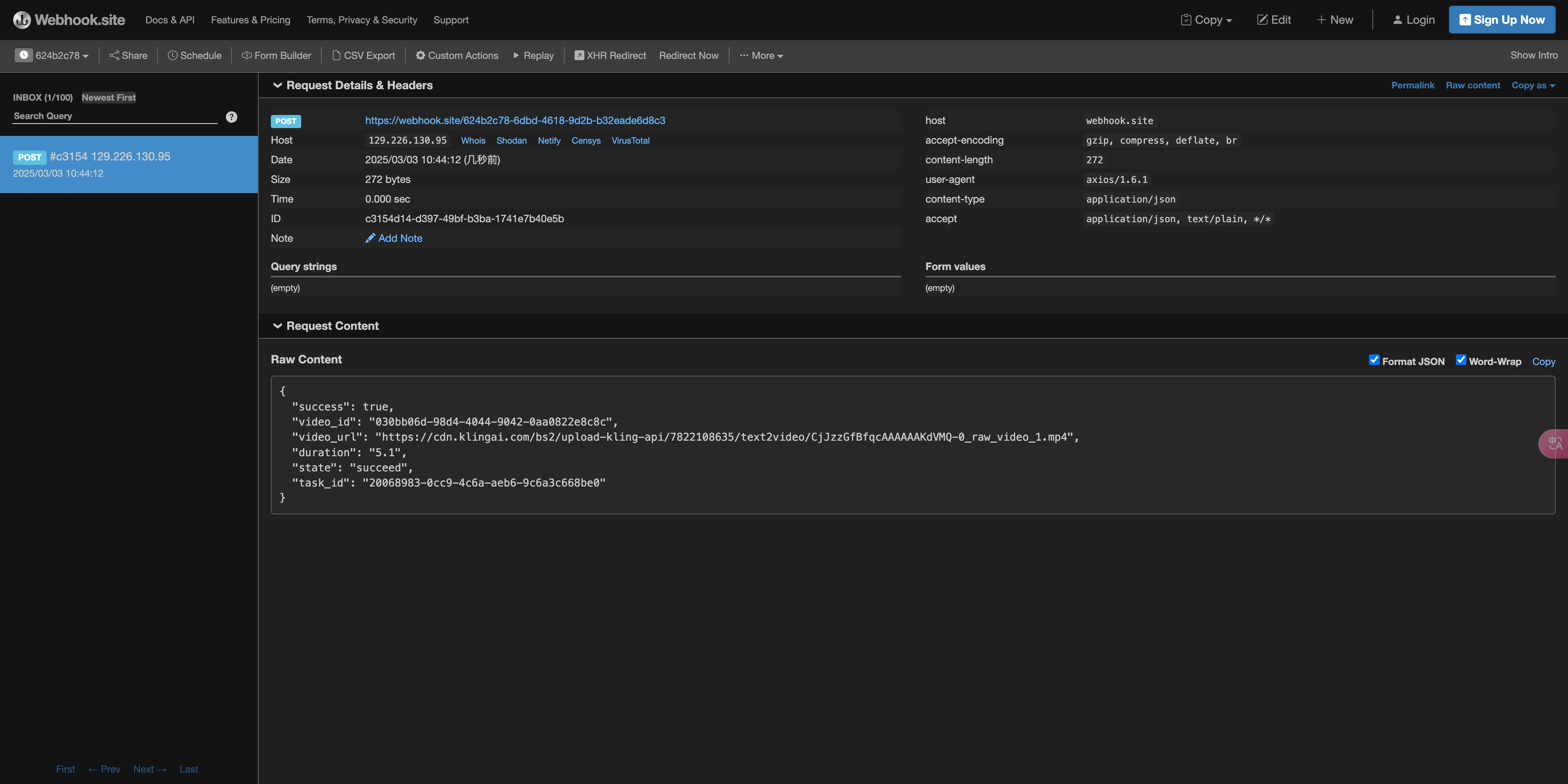

Asynchronous Callback

Video generation with the Wan Videos Generation API takes a relatively long time, approximately 1-2 minutes. If the API held the HTTP connection open for that whole period, it would consume additional system resources, so the API also supports asynchronous callbacks. The overall process is as follows: when the client initiates a request, it specifies an additional `callback_url` field. The API then immediately returns a result containing a `task_id` field, which represents the current task ID. When the task is completed, the generated video result is sent to the client-specified `callback_url` as a POST request with a JSON body, which also includes the `task_id` field, so the result can be associated with the task by ID.

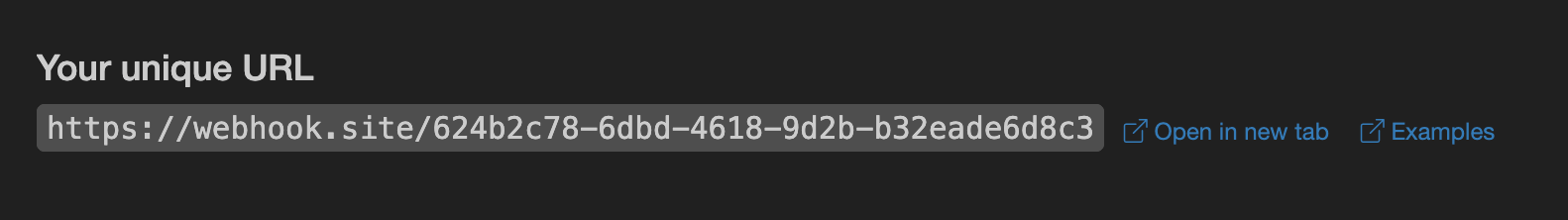

Let’s walk through a concrete example.

First, the Webhook callback is a service that can receive HTTP requests; developers should replace it with the URL of their own HTTP server. For demonstration purposes, the public Webhook sample site https://webhook.site/ is used here. Opening this site provides a Webhook URL, as shown in the image.

Copy this URL, and it can be used as a Webhook. The sample here is https://webhook.site/624b2c78-6dbd-4618-9d2b-b32eade6d8c3.

Next, set the `callback_url` field to the Webhook URL above and fill in the other parameters, as shown in the image:

Once the task is completed, the generated result can be observed at https://webhook.site/624b2c78-6dbd-4618-9d2b-b32eade6d8c3, as shown in the image:

The content is as follows:

The result contains a `task_id` field, and the other fields are similar to those described above. The `task_id` field allows the result to be associated with the original task.
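To replace the demonstration site with your own HTTP server, a receiver along the following lines may serve as a starting point. It is a minimal sketch using Python's standard library. The names `pending_tasks`, `register_task`, and `handle_callback` are hypothetical, and the status codes it returns are an assumption, since this document does not say what response the API expects from the callback endpoint.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# task_id -> callback payload; None means the callback has not arrived yet.
pending_tasks: dict = {}


def register_task(task_id: str) -> None:
    """Remember a task_id returned by the initial API response."""
    pending_tasks[task_id] = None


def handle_callback(body: bytes) -> bool:
    """Store a callback payload; return True if it matches a known task."""
    try:
        payload = json.loads(body.decode("utf-8"))
    except ValueError:  # covers invalid UTF-8 and invalid JSON
        return False
    if not isinstance(payload, dict):
        return False
    task_id = payload.get("task_id")
    if task_id not in pending_tasks:
        return False
    pending_tasks[task_id] = payload
    return True


class CallbackHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        known = handle_callback(self.rfile.read(length))
        # Assumed convention: 200 for a known task, 404 otherwise.
        self.send_response(200 if known else 404)
        self.end_headers()


def serve(port: int = 8000) -> None:
    """Run the receiver until interrupted."""
    HTTPServer(("0.0.0.0", port), CallbackHandler).serve_forever()
```

In use, you would call `register_task` with the `task_id` from the immediate response, run `serve()` behind a publicly reachable URL, and pass that URL as `callback_url`.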

Error Handling

When calling the API, if an error occurs, the API returns the corresponding error code and message. For example:

- `400 token_mismatched`: Bad request, possibly due to missing or invalid parameters.
- `400 api_not_implemented`: Bad request, possibly due to missing or invalid parameters.
- `401 invalid_token`: Unauthorized, invalid or missing authorization token.
- `429 too_many_requests`: Too many requests, you have exceeded the rate limit.
- `500 api_error`: Internal server error, something went wrong on the server.
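One way to act on these codes in client code is sketched below in Python. The table reproduces the errors listed in this document; the decision to retry only on 429 and 500, and the exponential backoff schedule, are assumptions about a reasonable client policy rather than behavior the API prescribes. All function names are hypothetical.

```python
from typing import List

# Errors listed in this document, keyed by (HTTP status, error code).
KNOWN_ERRORS = {
    (400, "token_mismatched"): "Bad request, possibly due to missing or invalid parameters.",
    (400, "api_not_implemented"): "Bad request, possibly due to missing or invalid parameters.",
    (401, "invalid_token"): "Unauthorized, invalid or missing authorization token.",
    (429, "too_many_requests"): "Too many requests, you have exceeded the rate limit.",
    (500, "api_error"): "Internal server error, something went wrong on the server.",
}

# Assumed policy: rate limits and server errors may succeed on a later attempt,
# while 400 and 401 need a corrected request or token first.
RETRYABLE_STATUSES = {429, 500}


def describe_error(status: int, code: str) -> str:
    """Return the documented message, or a generic one for unlisted errors."""
    return KNOWN_ERRORS.get((status, code), f"Unexpected error {status} {code}")


def should_retry(status: int) -> bool:
    """Tell whether retrying the same request is worthwhile."""
    return status in RETRYABLE_STATUSES


def backoff_delays(max_attempts: int = 4, base_seconds: float = 1.0) -> List[float]:
    """Delays (in seconds) to wait before each retry, doubling every time."""
    return [base_seconds * (2 ** attempt) for attempt in range(max_attempts)]
```

A caller could loop over `backoff_delays()`, sleeping for each delay and resending the request while `should_retry` returns true, and surface `describe_error` to the user otherwise.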