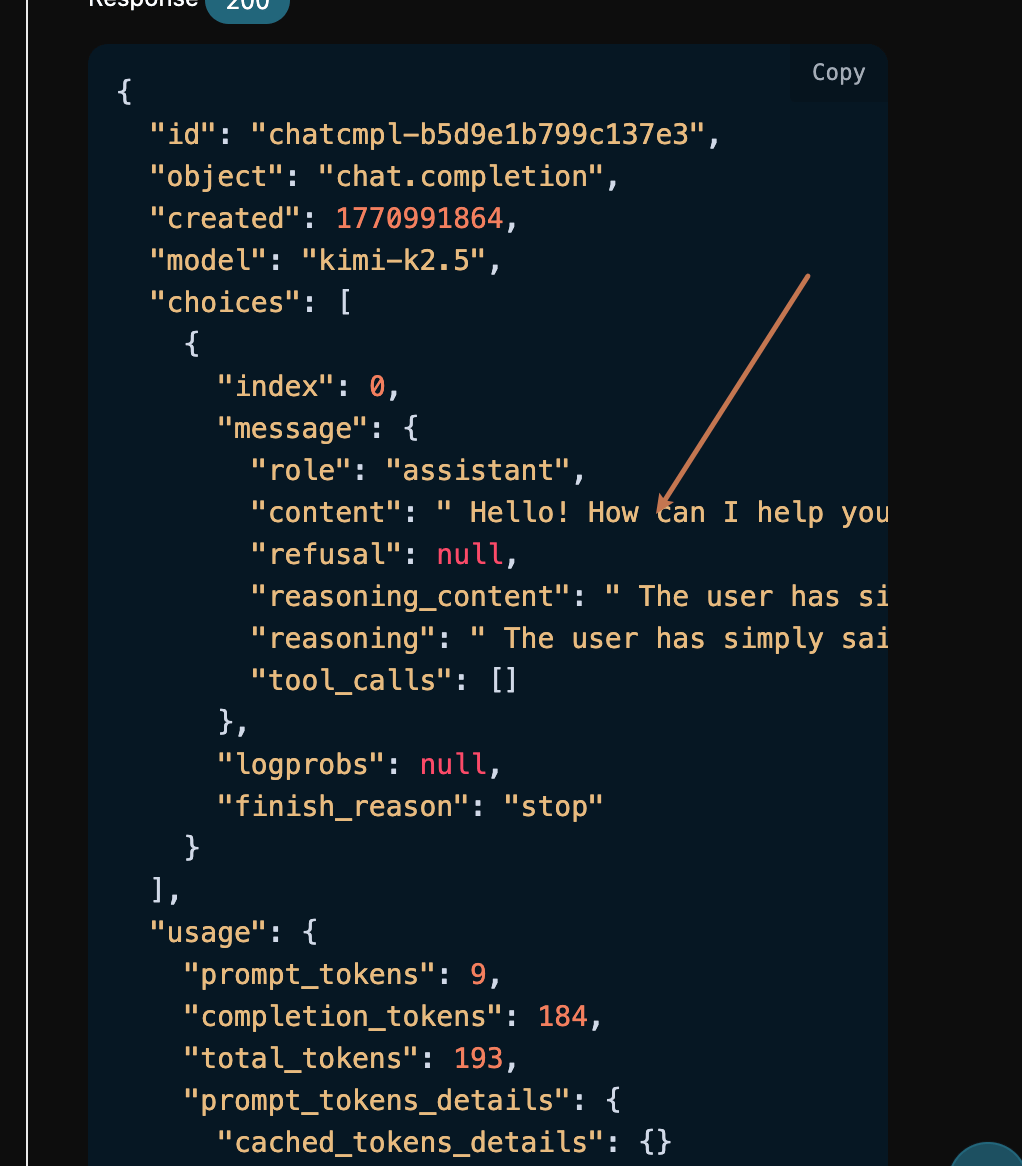

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"content": "", "role": "assistant"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " 這", "reasoning": " 這"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " 使用者說了「你好」。這是一個簡單的問候", "reasoning": " 使用者說了「你好」。這是一個簡單的問候"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": "。我應該以友好、熱情的方式回應", "reasoning": "。我應該以友好、熱情的方式回應"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": "。由於這是對話的開始,", "reasoning": "。由於這是對話的開始,"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " 我應該問我今天能如何幫助他們。\n\n", "reasoning": " 我應該問我今天能如何幫助他們。\n\n"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": "讓我來構思一個回應:\n- 確認這個", "reasoning": "讓我來構思一個回應:\n- 確認這個"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " 問候\n- 提供協助\n- 保持溫暖", "reasoning": " 問候\n- 提供協助\n- 保持溫暖"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " 和專業\n\n像這樣:\"你好!我", "reasoning": " 和專業\n\n像這樣:\"你好!我"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " 能如何幫助你今天?\"或\"嗨,", "reasoning": " 能如何幫助你今天?\"或\"嗨,"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": "! 我能為你做什麼?\"\n\n其實", "reasoning": "! 我能為你做什麼?\"\n\n其實"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": ",根據上下文,這似乎是", "reasoning": ",根據上下文,這似乎是"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " 一個一般的對話開場白。我會保持簡單並", "reasoning": " 一個一般的對話開場白。我會保持簡單並"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"reasoning_content": " 開放式以鼓勵他們分享他們的需求", "reasoning": " 開放式以鼓勵他們分享他們的需求"}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"content": " 你好!我能幫助", "reasoning_content": " 幫助。 ", "reasoning": " 幫助。 "}, "logprobs": null, "finish_reason": null}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [{"index": 0, "delta": {"content": " 你今天嗎?"}, "logprobs": null, "finish_reason": "stop"}], "usage": null}

data: {"id": "chatcmpl-952dd5e75583c4d1", "object": "chat.completion.chunk", "created": 1770992031, "model": "kimi-k2.5", "system_fingerprint": null, "choices": [], "usage": {"prompt_tokens": 9, "completion_tokens": 135, "total_tokens": 144, "prompt_tokens_details": {"cached_tokens_details": {}}, "completion_tokens_details": {}}}

data: [DONE]

如果你尚未登入或註冊,會自動跳轉到登入頁面邀請您來註冊和登入,登入註冊之後會自動返回當前頁面。

在首次申請時會有免費額度贈送,可以免費使用該 API。

如果你尚未登入或註冊,會自動跳轉到登入頁面邀請您來註冊和登入,登入註冊之後會自動返回當前頁面。

在首次申請時會有免費額度贈送,可以免費使用該 API。